Expert Warns Artificial Intelligence Poses Grave Risk to Democracy

Rainer Mühlhoff warns that artificial intelligence threatens democracy. He points to the United States, where tech evangelism is merging with governance and raising the risk of abuse.

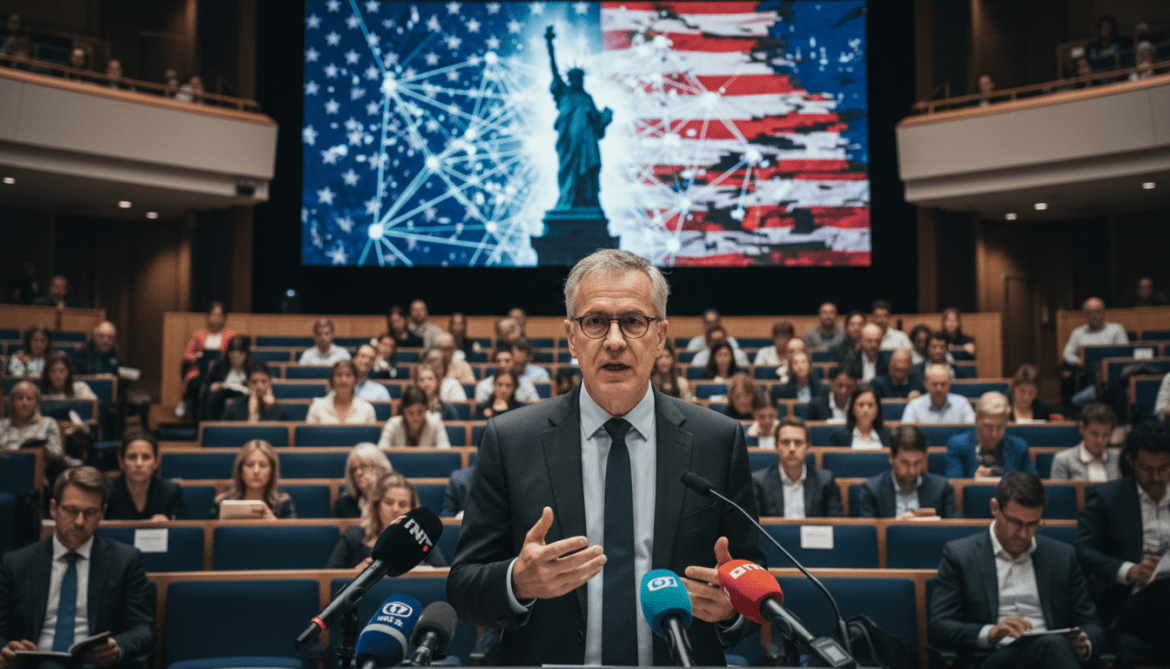

On April 19, 2026, the mathematician and philosopher Rainer Mühlhoff issued a stark warning about the threat artificial intelligence poses to democratic institutions. He argued that in the United States, uncritical faith in technology has become intertwined with political decision-making, creating conditions in which misuse is likely to flourish. According to Mühlhoff, concentrated control and opaque automated systems could undermine public accountability and civic debate.

Mühlhoff Frames the Threat in Ethical and Technical Terms

Mühlhoff, who works at the intersection of mathematics, philosophy and technology studies, described the danger primarily as structural rather than hypothetical. He emphasized that systems designed for efficiency or engagement can produce outcomes that erode deliberative processes and citizen oversight. According to him, the real risk lies in how those systems are embedded into institutions that make policy, enforce rules and shape public discourse.

He highlighted the importance of examining both algorithmic design and the incentives of the organizations that deploy these systems. When profit motives or political objectives align with technologies that amplify certain behaviors, the safeguards that traditionally protect democratic processes can be bypassed or weakened. That combination, he warned, makes the “danger of misuse” particularly severe.

Focus on the United States as a Case Study

Mühlhoff singled out developments in the United States as a cautionary example of technological optimism intersecting with governance. He pointed to a public environment in which tech leaders, platform decisions and new communication architectures wield outsized influence over information flows and policy debates. That concentration, he said, creates vulnerabilities that foreign and domestic actors can exploit or deepen.

Photographs of prominent technology entrepreneurs have become shorthand in public debate for this trend, illustrating how corporate leaders and social platforms increasingly shape political conversation. Mühlhoff’s concern is not limited to personalities; it extends to the institutional power that emerges when commercial platforms become central channels for news, coordination and public opinion formation.

Mechanisms by Which AI Could Undermine Democratic Norms

Experts note several pathways through which artificial intelligence can affect democratic practice, and Mühlhoff referenced many of them in his analysis. Automated recommendation systems can prioritize polarizing or sensational content because such material generates engagement, diverting public attention from information that supports deliberation. Similarly, persuasion techniques built on data-driven models can micro-target specific voter segments without public scrutiny.

Other risks include automated decision-making in public administration, where opaque models might determine access to services or legal outcomes without adequate transparency or appeal. The combination of surveillance capabilities, profiling and automated enforcement can disproportionately affect marginalized groups and reduce the visibility of institutional responsibility. Mühlhoff stressed that these mechanisms do not require malicious intent to cause harm; they can emerge from design choices and commercial incentives.

Gaps in Oversight and Calls for Institutional Remedies

Mühlhoff and other scholars argue that current oversight regimes lag behind technological deployment, leaving gaps regulators must urgently address. He urged stronger accountability measures, including independent audits of high-impact systems, clearer standards for transparency and safeguards for human oversight in public-sector applications. These measures aim to ensure that decisions affecting citizens remain contestable and subject to democratic control.

He also called for greater international coordination given the global reach of major platforms and AI providers. Cross-border information flows and multinational corporate structures make unilateral oversight insufficient, Mühlhoff warned, recommending that policymakers work with civil society and technical experts to create enforceable norms. Without coordinated rules, regulatory fragmentation or weak enforcement risks leaving critical vulnerabilities unaddressed.

Civic Resilience and Practical Steps Forward

Beyond regulation, Mühlhoff emphasized the role of civic institutions and media in building resilience to technology-driven distortions. Investing in public-interest journalism, improving digital literacy and strengthening fact-checking infrastructure can reduce the impact of automated amplification of false or inflammatory content. He argued that a healthy information ecosystem must combine technological safeguards with robust public goods.

Practical steps include requiring platforms to disclose algorithmic criteria for content moderation and political advertising, funding independent research into system effects, and ensuring that public agencies adopt algorithmic impact assessments. Mühlhoff suggested that transparency measures coupled with enforceable rights for affected individuals would make it harder for opaque systems to operate without consequence.

The warnings from Mühlhoff add urgency to an already active debate over the governance of artificial intelligence, particularly where private platforms intersect with public functions.

Policymakers, technologists and civic groups now face the question of how to reconcile innovation with the preservation of democratic norms. The stakes, Mühlhoff argues, extend beyond technical failure to the very capacity of societies to hold power to account and maintain open, informed public debate.