Göttingen Team Develops Brain-Computer Interface Training That Uses Posture Signals to Control Prosthetic Hands

Göttingen scientists have developed a brain-computer interface training method that uses neural hand-posture signals to make prosthetic hands more precise, with the aim of helping paralyzed patients.

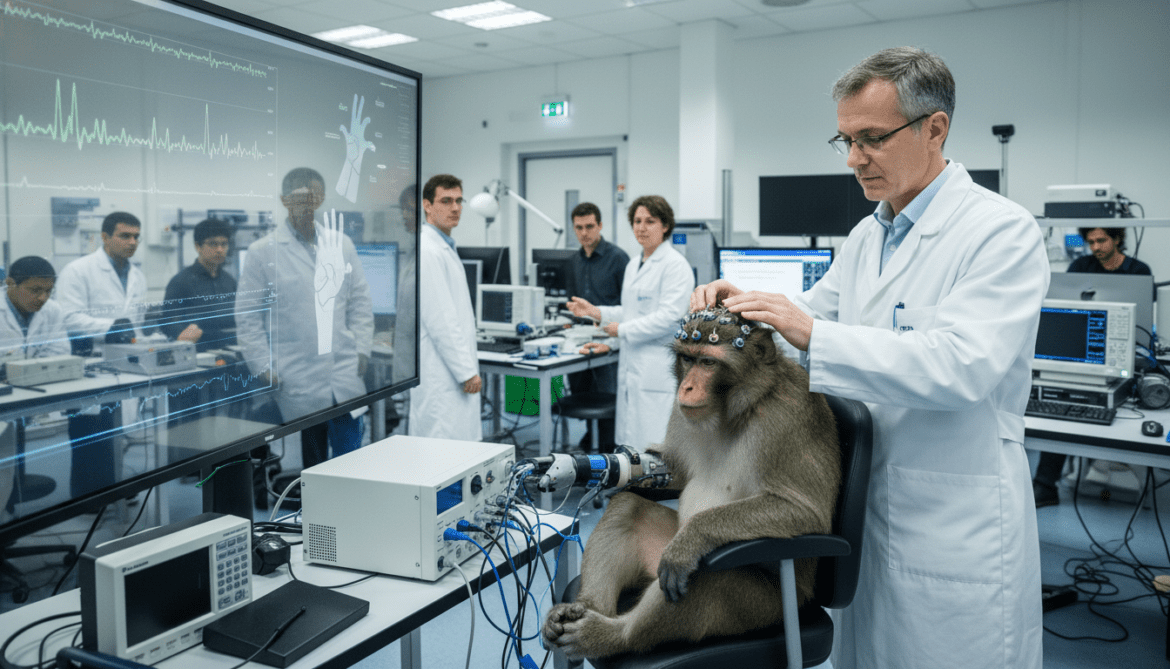

Researchers at the German Primate Center in Göttingen have unveiled a new brain-computer interface training protocol that uses neural posture signals to achieve precise control of prosthetic hands. The study, conducted with rhesus monkeys, shows that signals encoding specific hand postures, rather than movement velocity, can provide the most accurate command input for neuroprosthetic devices. The finding could reshape how clinical brain-computer interfaces are designed for people with paralysis.

Göttingen laboratory shifts focus from velocity to posture

The research team at the German Primate Center – Leibniz Institute for Primate Research reexamined long-standing assumptions about which neural signals best drive prosthetic limbs. Previous work has prioritized velocity-related neural activity, which represents how fast a hand moves toward a target. The Göttingen group instead trained decoding algorithms to emphasize posture representations and the path of execution, which markedly improved control.

The investigators argue that the choice of neural feature is central to prosthesis performance because the brain represents both how a hand is shaped and how it moves. By reframing the decoding problem to prioritize these static hand configurations, the team achieved finer, more reliable grasps in their experimental system. That perspective contrasts with decades of research that emphasized dynamic movement metrics.

Monkeys learned to operate a virtual hand by imagination

Two rhesus macaques were trained to manipulate a virtual avatar hand displayed on a screen while wearing a magnetic-sensor data glove that recorded their real hand kinematics. During initial training, the animals performed grips with their own hands while watching the avatar, establishing a behavioral and neural mapping between movement and visual feedback. This preparatory phase served to align the animals' motor intent with the virtual hand's actions.

In the critical phase, the animals controlled the avatar merely by imagining grips, while researchers recorded neuronal population activity from cortical areas specialized for hand movement. The imagined control allowed the team to test whether neural posture signals alone could drive accurate prosthetic actions without peripheral movement. Performance during imagined trials approached the precision of actual hand movements recorded earlier.

Posture-related neural signals outperformed velocity signals

Comparative analyses showed that neural populations encoding hand postures carried more useful information for shaping prosthetic grasps than those tied primarily to movement speed. When decoders were tuned to posture representations, the virtual hand executed a wider variety of precise grips and maintained stable hand shapes during object interaction. In contrast, velocity-focused decoders produced less consistent postures, complicating delicate manipulations.
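
One plausible contributor to the less consistent postures of velocity decoders is that they must integrate their speed estimates over time, so small errors accumulate into drift. A toy simulation makes this easy to see. The sketch below is a hypothetical illustration, not the Göttingen team's code. It assumes a single joint angle (a "grip aperture"), a hand held still, and equal Gaussian decoding noise per time step for both decoders. The function name and all parameter values are invented for the example.

```python
import random
import statistics

def simulate_hold(steps=500, noise_sd=1.0, seed=0):
    """Toy comparison (hypothetical, not the study's decoder): decode a held
    hand posture (one joint angle, degrees) either directly or by integrating
    decoded velocity. Both decoders get the same Gaussian noise per step."""
    rng = random.Random(seed)
    true_angle = 30.0      # hypothetical held grip aperture
    true_velocity = 0.0    # the hand is holding still

    posture_errors = []
    velocity_errors = []
    integrated_angle = true_angle  # velocity decoder starts at the correct posture
    for _ in range(steps):
        # Posture decoder: each sample is an independent noisy read-out of the angle.
        decoded_angle = true_angle + rng.gauss(0.0, noise_sd)
        posture_errors.append(abs(decoded_angle - true_angle))

        # Velocity decoder: noisy velocity is integrated, so errors pile up.
        decoded_velocity = true_velocity + rng.gauss(0.0, noise_sd)
        integrated_angle += decoded_velocity  # one time step per sample
        velocity_errors.append(abs(integrated_angle - true_angle))

    return statistics.mean(posture_errors), statistics.mean(velocity_errors)

posture_mae, velocity_mae = simulate_hold()
print(f"posture decoder mean error:  {posture_mae:.2f} deg")
print(f"velocity decoder mean error: {velocity_mae:.2f} deg")
```

In this toy setup, the posture decoder's error stays around the per-sample noise level. The integrated velocity estimate behaves like a random walk and wanders further from the held posture as time goes on.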

The results challenge the conventional emphasis on dynamic kinematics in brain-controlled prosthetics and suggest a reorientation toward the neural codes the brain uses to specify hand shape. For everyday tasks that require precision—such as buttoning, threading, or holding fragile items—stable posture control is essential, and the Göttingen findings indicate this signal class is directly usable by a BCI.

Algorithmic change: decoding the path as well as the goal

A key innovation was adapting the decoding algorithm so that it did not treat movement as a simple point-to-point transfer but accounted for the trajectory and intermediate postures the hand must assume. By incorporating the path of execution into the translation of neural activity to device motion, the protocol improved the smoothness and accuracy of prosthetic grasps. Researchers described this shift as making the decoder sensitive to both destination and the route taken to reach it.
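
The article does not describe the Göttingen algorithm in enough detail to reproduce it. The idea of a decoder that respects the route as well as the goal can, however, be illustrated with a standard technique from the BCI literature, the Kalman filter, which is swapped in here purely for illustration. Its state model assumes that posture at each time step stays close to posture at the previous step. In other words, the hand is assumed to travel through a continuous path of intermediate postures rather than jump from start to goal. The grasp trajectory, noise levels, and function names are all hypothetical.

```python
import math
import random
import statistics

def true_aperture(t, steps):
    """Hypothetical smooth grasp: aperture closes from 80 to 20 degrees."""
    phase = min(t / (steps - 1), 1.0)
    return 50.0 + 30.0 * math.cos(math.pi * phase)

def decode_grasp(steps=200, obs_sd=5.0, process_sd=1.0, seed=1):
    """Compare raw per-sample decoding with a path-aware (Kalman-filtered) estimate.

    Illustrative only: a scalar Kalman filter with a random-walk state model,
    not the decoder used in the Göttingen study.
    """
    rng = random.Random(seed)
    truth = [true_aperture(t, steps) for t in range(steps)]
    # Noisy per-sample posture read-outs stand in for a decoder's raw output.
    observations = [x + rng.gauss(0.0, obs_sd) for x in truth]

    estimate = observations[0]
    variance = obs_sd ** 2
    filtered = [estimate]
    for z in observations[1:]:
        # Predict: the path model says posture changes only a little per step.
        variance += process_sd ** 2
        # Update: weight the new read-out by how uncertain the prediction is.
        gain = variance / (variance + obs_sd ** 2)
        estimate += gain * (z - estimate)
        variance *= 1.0 - gain
        filtered.append(estimate)

    raw_err = statistics.mean(abs(z - x) for z, x in zip(observations, truth))
    kf_err = statistics.mean(abs(e - x) for e, x in zip(filtered, truth))
    return raw_err, kf_err

raw_err, kf_err = decode_grasp()
print(f"raw per-sample error: {raw_err:.2f} deg")
print(f"path-aware error:     {kf_err:.2f} deg")
```

The parameter `process_sd` controls the trade-off. A smaller value trusts the path model more and smooths harder, but the estimate then lags behind fast grasps.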

When the team compared avatar movements driven by the new protocol against the original recorded movements of the monkeys’ real hands, the virtual actions matched the real-hand metrics closely. This parity suggests the approach can recreate nuanced motor strategies the nervous system employs during everyday manipulation, supporting the goal of delivering more natural and functional prosthetic control.
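
The article does not say which metrics were used to compare avatar and real-hand movements. A minimal sketch, assuming both are recorded as equally sampled joint-angle traces, could use two common measures. Root-mean-square error shows how far apart the traces are, and Pearson correlation shows how similar their shapes are. The helper names and the numbers in the usage example are hypothetical, not data from the study.

```python
import math

def rmse(a, b):
    """Root-mean-square difference between two equally long traces."""
    if len(a) != len(b) or not a:
        raise ValueError("traces must be non-empty and the same length")
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)) / len(a))

def pearson_r(a, b):
    """Pearson correlation between two traces (1.0 means identical shape)."""
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("traces must have the same length of at least 2")
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    if var_a == 0 or var_b == 0:
        raise ValueError("correlation is undefined for a constant trace")
    return cov / math.sqrt(var_a * var_b)

# Hypothetical grip-aperture traces in degrees (made-up numbers for illustration).
real = [80, 72, 60, 45, 32, 24, 20]
avatar = [79, 73, 58, 46, 33, 23, 21]
print(f"RMSE: {rmse(real, avatar):.2f} deg, r = {pearson_r(real, avatar):.4f}")
```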

Implications for neuroprosthetics and people with paralysis

The study’s findings have direct implications for the design of next-generation neural hand prostheses intended for people with spinal cord injury, ALS, or other causes of paralysis. By exploiting posture-based neural signals and path-aware decoding, prosthetic devices could offer users improved fine motor control, enabling tasks that current systems struggle with. Enhanced grip variety and steadier hand configurations would expand the range of feasible daily activities.

Translating the approach from nonhuman primates to human patients will require further development, including validation with human cortical recordings and integration into wearable prosthetic hardware. Nonetheless, the conceptual shift toward posture-centric decoding provides a concrete engineering direction for teams aiming to restore hand function and independence to people living with severe motor impairments.

Funding, ethical oversight and next research steps

The project received support from the German Research Foundation through grants FOR-1847 and SFB-889, and from the European Union Horizon 2020 initiative under the B-CRATOS program (grant agreement GA 965044). Experimental protocols involving nonhuman primates were conducted under institutional oversight in accordance with applicable ethical and welfare standards. The authors emphasize responsible translation as a priority moving forward.

Planned next steps include testing the posture-based decoding approach with chronically implanted recording systems and with human participants in controlled clinical settings. Researchers also note the need to address long-term reliability, biocompatibility of neural interfaces, and regulatory pathways before broad clinical deployment is feasible.

The Göttingen study reframes a basic design choice in brain-computer interfaces, showing that tapping into posture-encoding neural activity and decoding the movement path can yield more precise prosthetic hand control and bring practical benefits closer for patients with paralysis.