Battlefield data fusion software shapes satellite analysis and targeting in Iran conflict

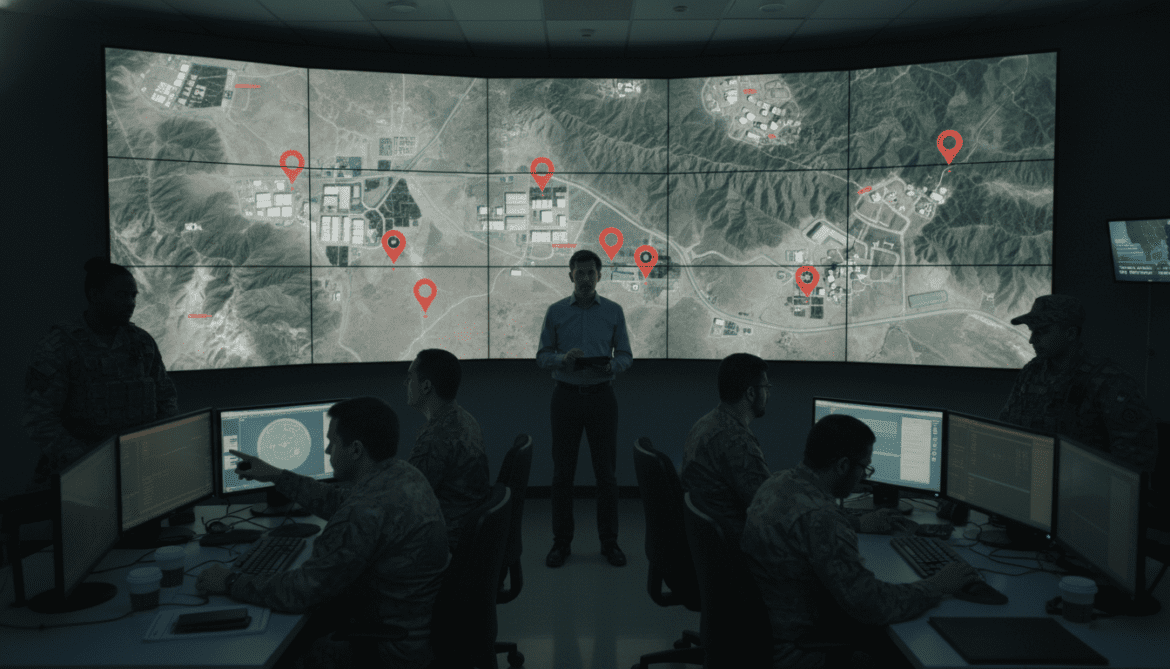

Battlefield data fusion software that links disparate databases and analyzes satellite imagery is playing an increasingly central role in operations tied to the Iran conflict, raising fresh questions about accuracy, oversight and the ethics of automated targeting. Military units and intelligence analysts are using these systems to combine signals, human reports and commercial imagery to produce recommended courses of action on the battlefield. The prominence of such software in recent satellite-image assessments has highlighted both its operational value and the risks inherent in relying on algorithmically generated recommendations.

Software aggregates databases and prioritizes possible targets

Battlefield data fusion software is designed to ingest large volumes of structured and unstructured information from multiple sources. These systems fuse logistics data, sensor feeds, open-source reporting and satellite imagery into a single analytic workspace. That consolidated picture enables operators to see correlations across datasets that would be difficult and slow to identify manually.

By applying pattern recognition and rule-based logic, the software can flag locations or activities that match predefined threat signatures. The tools then rank those findings by confidence level and potential operational significance. Users say this prioritization can speed decision cycles, but it also means that automated outputs exert substantial influence on human judgment.

Satellite imagery analysis has become a focal test in the Iran conflict

Recent assessments tied to the Iran conflict have underscored how satellite imagery processed by fusion systems is shaping operational decisions. Imagery analysts feed millions of pixels into algorithms that detect changes over time, identify vehicle movements and infer infrastructure use. When combined with other intelligence layers, those inferences are presented as possible targets or key insights for commanders.

Officials and analysts note that the increasing commercial availability of high-resolution satellite imagery has amplified the impact of these systems. The ability to cross-reference fresh imagery with past archives and other sensor inputs accelerates the creation of actionable intelligence. However, faster production of recommendations does not eliminate the need for careful human verification.

How analytic engines identify and recommend battlefield actions

At the core of battlefield data fusion software are analytic engines that use a mix of statistical models, machine learning classifiers and rule-driven logic. These engines tag entities (such as vehicles, buildings or personnel patterns) and assess the relationships among them. They then generate confidence scores that indicate how likely each detection is to be accurate or relevant.

The recommendation layer then orders possible targets or priorities based on those scores, mission rules and user-defined criteria. Operators can drill into provenance details to see which datasets and models produced a particular recommendation. This traceability is intended to support accountability, but the complexity of some models can make full explanation challenging in time-constrained environments.
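
The ordering and traceability described above can be illustrated with a minimal Python sketch. It is not drawn from any fielded system: the `Finding` fields, the scoring rule (confidence multiplied by a user-defined significance weight), the 0.5 cutoff and the sample data are all hypothetical assumptions chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Finding:
    """One analytic finding. Every field name here is hypothetical."""
    label: str
    confidence: float        # model confidence, 0.0 to 1.0
    significance: float      # user-defined weight, 0.0 to 1.0
    sources: list = field(default_factory=list)  # provenance: contributing datasets and models
    human_verified: bool = False                 # meant to be set only by a human reviewer


def rank_for_review(findings, min_confidence=0.5):
    """Order findings for analyst review by confidence times significance.

    Findings below min_confidence are dropped rather than ranked.
    """
    kept = [f for f in findings if f.confidence >= min_confidence]
    return sorted(kept, key=lambda f: f.confidence * f.significance, reverse=True)


def provenance_line(finding):
    """Summarize which inputs produced a finding, for an audit trail."""
    status = "verified" if finding.human_verified else "UNVERIFIED"
    sources = ", ".join(finding.sources) or "no sources recorded"
    score = finding.confidence * finding.significance
    return f"{finding.label} [{status}] score={score:.2f} from: {sources}"


# Illustrative, made-up inputs.
findings = [
    Finding("site A activity change", 0.9, 0.5, ["imagery-archive", "change-detector-v1"]),
    Finding("site B vehicle movement", 0.7, 0.9, ["imagery-archive", "open-source-report"]),
    Finding("site C anomaly", 0.3, 1.0, ["sensor-feed"]),
]

for f in rank_for_review(findings):
    print(provenance_line(f))
```

In this sketch each finding carries its source list and an explicit verification flag, so a reviewer can see where a ranking came from and whether a person has checked it. Real systems with complex models would need far richer explanations than a list of source names.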

Accuracy limits, false positives and operational risk

Experts caution that no fusion system is infallible, and the Iran conflict has exposed limits tied to data quality and model assumptions. Satellite imagery can be obscured by weather, shadows or resolution constraints, and commercial imagery may lack the context that classified sensors provide. When models rely on incomplete or biased inputs, the risk of false positives increases, potentially leading to misdirected strikes or flawed assessments.
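
A short worked calculation shows why false positives matter even when a detector looks accurate on paper. The sketch below applies Bayes' rule. The prevalence, sensitivity and false-positive rate are invented for illustration and do not describe any real system.

```python
def precision(prevalence, sensitivity, false_positive_rate):
    """Share of flagged items that are genuine, by Bayes' rule."""
    true_flags = prevalence * sensitivity
    false_flags = (1.0 - prevalence) * false_positive_rate
    return true_flags / (true_flags + false_flags)


# Hypothetical numbers: 1 in 1,000 scanned locations is of real interest,
# the detector catches 95% of those and wrongly flags 2% of the rest.
p = precision(prevalence=0.001, sensitivity=0.95, false_positive_rate=0.02)
print(f"{p:.1%} of flags are correct")
```

Under these assumed figures, fewer than one flag in twenty points at something real, because genuine cases are so rare among everything scanned. Degraded inputs that raise the false-positive rate push that share lower still.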

The human operators who receive system outputs can be subject to automation bias (overreliance on machine recommendations), particularly under pressure. Analysts and legal advisers warn that operational protocols must preserve critical human judgment to prevent errors from cascading into harmful consequences. Exercises and red-team reviews are being used to surface failure modes and improve safeguards.

Legal, ethical and policy concerns intensify around automated recommendations

The expanded use of battlefield data fusion software has prompted renewed calls for clearer legal and ethical frameworks. International law experts emphasize that liability and responsibility remain with humans and political authorities, not with software. Nevertheless, questions persist about how to ensure compliance with the law of armed conflict when targeting decisions are informed by algorithmic analysis.

Civil society groups and some defense officials urge transparency about the role of automated tools, audit trails for recommendations and strict rules for human review before lethal actions. Policymakers are also debating export controls and procurement standards to limit the proliferation of capabilities that could be misused in other conflicts.

Military dependence and procurement pressures push technologies forward

Pentagon and allied procurement officials face pressure to field tools that reduce uncertainty and shorten decision timelines. That dynamic has accelerated investment in commercial analytic platforms and partnerships with private vendors. The operational advantages cited include faster intelligence fusion, improved situational awareness and the ability to operate with dispersed forces.

At the same time, military planners acknowledge the need to harden systems against adversary manipulation, protect data integrity and ensure interoperability across allied networks. The rapid adoption of fusion software has outpaced some doctrinal and legal updates, sparking internal reviews aimed at aligning capability with policy and oversight.

Recent operations related to the Iran conflict have made clear that battlefield data fusion software is no longer a back-office analytic aid but a front-line enabler of action. Its growing role demands a careful balance between speed and verification, and between technological advantage and legal and ethical responsibility.

As these systems continue to be refined and deployed, commanders, policymakers and civil society will need to collaborate on standards that preserve human accountability while leveraging analytic power. The coming months are likely to bring more focused guidance on testing, oversight and operational limits as militaries reconcile technological possibilities with the realities of conflict.